What is Prompt Management?

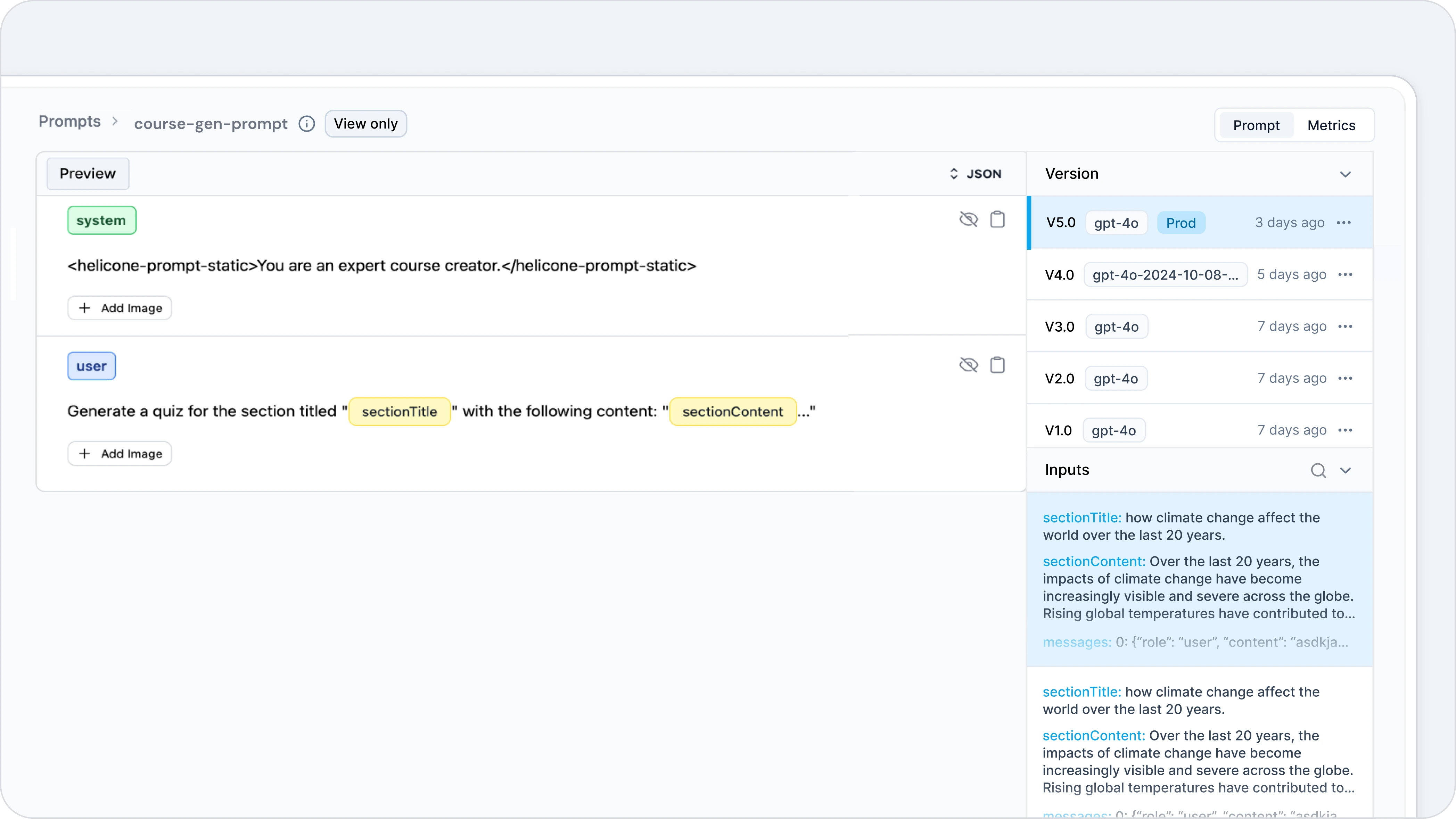

Helicone’s prompt management provides a seamless way to version, track, and optimize the prompts used in your AI applications.

Why manage prompts in Helicone?

Once you set up prompts in Helicone, your incoming requests will be matched to a `helicone-prompt-id`, allowing you to:

- version and track iterations of your prompt over time.

- maintain a dataset of inputs and outputs for each prompt.

- generate content using predefined prompts through our Generate API.

Quick Start

Prerequisites

Please set up Helicone in proxy mode using one of the methods in the Starter Guide.

Not sure if proxy is for you? We’ve created a guide that explains the difference between Helicone Proxy and Helicone Async integration.

Create prompt templates

As you modify your prompt in code, Helicone automatically tracks the new version and keeps a record of the old prompt. A dataset of input/output keys is also preserved for each version.

- TypeScript / JavaScript

- Python

- Packageless (cURL)

Add `hpf` and identify input variables

By prefixing your prompt with `hpf` and enclosing your input variables in an additional `{}`, you let Helicone easily detect your prompt and its inputs. We’ve designed this to require minimal code changes, so Prompts stay as easy as possible to use.

Static prompts with `hpstatic`

In addition to `hpf`, Helicone provides `hpstatic` for creating static prompts that don’t change between requests. This is useful for system prompts or other constant text that you don’t want treated as variable input. To use `hpstatic`, import it along with `hpf`, then use it to wrap the constant text. The `hpstatic` function wraps the entire text in `<helicone-prompt-static>` tags, indicating to Helicone that this part of the prompt should not be treated as variable input.

Change input name

To rename an input or use a custom input name, change the key-value pair in the dictionary passed to the string formatter function.

Put it together

Let’s say we have an app that generates a short story, where users can input their own character. For example, the prompt is “Write a story about a secret agent”, where the character is “a secret agent”.

- TypeScript / JavaScript example

- Python example

- Packageless (cURL) example
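The story example above can be sketched end to end in TypeScript. This is a self-contained illustration, not Helicone’s SDK: `hpfSketch` and `hpstaticSketch` are hypothetical stand-ins for the real `hpf` and `hpstatic` helpers, the `<helicone-prompt-input key="...">` tag format that `hpfSketch` produces is an assumption, and sending the prompt ID as a `Helicone-Prompt-Id` header is likewise an assumption based on the `helicone-prompt-id` naming above. The ID `prompt_story` and the model name are placeholder values, and the proxy URL and auth headers from the Starter Guide are omitted.

```typescript
// Hypothetical stand-ins for Helicone's `hpf` and `hpstatic` helpers, written
// only to illustrate the idea. The real helpers come from Helicone; the
// `helicone-prompt-input` tag format used here is an assumption.
type PromptInput = Record<string, string>;

function hpfSketch(strings: TemplateStringsArray, ...inputs: PromptInput[]): string {
  let out = strings[0];
  for (let i = 0; i < inputs.length; i++) {
    // `${{ character }}` passes { character: "..." }: the key names the input.
    const key = Object.keys(inputs[i])[0];
    out += `<helicone-prompt-input key="${key}">${inputs[i][key]}</helicone-prompt-input>`;
    out += strings[i + 1];
  }
  return out;
}

function hpstaticSketch(strings: TemplateStringsArray, ...values: unknown[]): string {
  // Join the literal text, then wrap the whole thing in static tags.
  let text = strings[0];
  for (let i = 0; i < values.length; i++) {
    text += String(values[i]) + strings[i + 1];
  }
  return `<helicone-prompt-static>${text}</helicone-prompt-static>`;
}

// The user-supplied character for the short-story app.
const character = "a secret agent";

// Default input name: the variable name, "character".
const userPrompt = hpfSketch`Write a story about ${{ character }}`;

// Custom input name: change the key in the object literal to "hero".
const renamedPrompt = hpfSketch`Write a story about ${{ hero: character }}`;

// Placeholder OpenAI-style chat request sent through the Helicone proxy.
// "prompt_story" and the model name are placeholder values.
const request = {
  headers: {
    "Helicone-Prompt-Id": "prompt_story",
  },
  body: {
    model: "gpt-4o-mini",
    messages: [
      { role: "system", content: hpstaticSketch`You are a creative storyteller.` },
      { role: "user", content: userPrompt },
    ],
  },
};

console.log(userPrompt);
console.log(renamedPrompt);
```

The extra `{}` works because `${{ character }}` is an object-literal shorthand: the variable’s name becomes the key, which is what lets a formatter recover an input name without any extra arguments.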

Run experiments

Once you’ve set up prompt management, you can use Helicone’s Experiments feature to test and improve your prompts.

Local testing

During development, you may want to test your prompt locally before deploying it to production, without Helicone tracking new prompt versions.

- TypeScript / JavaScript

- Python
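A minimal sketch of switching testing mode on and off per request. Only the `Helicone-Prompt-Mode: testing` header and value come from this page; the helper `buildPromptHeaders`, the `Helicone-Prompt-Id` header name, and the `prompt_story` ID are hypothetical.

```typescript
// Hypothetical helper that builds Helicone headers for a prompt request.
// The `Helicone-Prompt-Id` header name is an assumption.
function buildPromptHeaders(promptId: string, localTest: boolean): Record<string, string> {
  const headers: Record<string, string> = {
    "Helicone-Prompt-Id": promptId,
  };
  if (localTest) {
    // Ask Helicone not to track new prompt versions for this request.
    headers["Helicone-Prompt-Mode"] = "testing";
  }
  return headers;
}

// Local experiment: new prompt versions are not tracked.
const devHeaders = buildPromptHeaders("prompt_story", true);
// Production request: versions are tracked as usual.
const prodHeaders = buildPromptHeaders("prompt_story", false);
```

Deriving `localTest` from an environment flag is one way to ensure production code never sends the testing header.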

To keep Helicone from tracking new prompt versions while testing locally, set the `Helicone-Prompt-Mode` header to `testing` in your LLM request.

FAQ

How can I improve my LLM app's performance?


Improving your LLM app primarily revolves around sophisticated prompt engineering. Here are some key techniques to optimize your prompt:

- Be specific and clear.

- Use structured formats.

- Leverage role-playing.

- Implement few-shot learning.
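As an illustration of these techniques together, the sketch below builds a chat message list in the common OpenAI-style `{ role, content }` shape: a system message sets a role and asks for a specific, structured JSON reply, and two example exchanges provide few-shot demonstrations. The `ChatMessage` type, `buildFewShotMessages` helper, and all message contents are illustrative assumptions, not a Helicone API.

```typescript
// Illustrative OpenAI-style chat message shape (an assumption, not a Helicone type).
interface ChatMessage {
  role: "system" | "user" | "assistant";
  content: string;
}

// Few-shot examples: input/output pairs the model can imitate.
const examples: Array<[string, string]> = [
  ["a secret agent", '{"title": "Cold Drop", "genre": "thriller"}'],
  ["a lighthouse keeper", '{"title": "The Last Light", "genre": "drama"}'],
];

function buildFewShotMessages(character: string): ChatMessage[] {
  // Role-playing plus a specific, structured output format.
  const messages: ChatMessage[] = [
    {
      role: "system",
      content:
        "You are a story editor. Reply only with JSON containing " +
        '"title" and "genre" for a short story about the given character.',
    },
  ];
  // Each example becomes a user turn followed by the ideal assistant reply.
  for (const [input, output] of examples) {
    messages.push({ role: "user", content: input });
    messages.push({ role: "assistant", content: output });
  }
  // The real request comes last.
  messages.push({ role: "user", content: character });
  return messages;
}

const fewShot = buildFewShotMessages("a retired astronaut");
```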

Does Helicone own my prompts?


Helicone does not own your prompts. We simply provide a logging and observability platform that captures and securely stores your LLM interaction data. When you use our UI, prompts are temporarily stored in our system, but when using code-based integration, prompts remain exclusively in your codebase. Our commitment is to offer transparent insights into your LLM interactions while ensuring your intellectual property remains completely under your control.

How does the version indexing work?


In Helicone, you may notice that some of your prompt versions are labeled V2.0, while others are labeled V2.1. Major versions (e.g., V1.0, V2.0, V3.0) are your prompts in production. Minor versions (e.g., V1.1, V1.2, V1.3) are experiment versions created in Helicone; in this example, they were forked from your production version V1.0.
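The labeling convention above can be illustrated with a small parser. `describeVersion` is a hypothetical helper, not part of Helicone; it encodes only the rule described here, and treating every minor version as forked from its major’s `.0` version is an assumption generalized from the V1.0 example.

```typescript
// Hypothetical helper that interprets a Helicone-style version label.
interface VersionInfo {
  major: number;
  minor: number;
  kind: "production" | "experiment";
  forkedFrom?: string; // production version an experiment was forked from
}

function describeVersion(label: string): VersionInfo {
  const match = /^V(\d+)\.(\d+)$/.exec(label);
  if (match === null) {
    throw new Error(`Unrecognized version label: ${label}`);
  }
  const major = Number(match[1]);
  const minor = Number(match[2]);
  if (minor === 0) {
    // Major versions (V1.0, V2.0, ...) are the prompts in production.
    return { major, minor, kind: "production" };
  }
  // Minor versions (V1.1, V1.2, ...) are experiments; assumed forked from V<major>.0.
  return { major, minor, kind: "experiment", forkedFrom: `V${major}.0` };
}

const prod = describeVersion("V2.0");
const experiment = describeVersion("V1.2");
```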

Need more help?

Additional questions or feedback? Reach out to help@helicone.ai or schedule a call with us.