Helicone runs on Cloudflare Workers, which execute code across Cloudflare’s global network, to provide a fast and reliable proxy for your LLM requests. Because each request is handled by the server closest to your users, the proxy adds minimal latency.


How Cloudflare Workers Minimize Latency

Cloudflare Workers operate on a serverless architecture running on Cloudflare’s global edge network. This means your requests are processed at the edge, reducing the distance data has to travel and significantly lowering latency. Workers are powered by V8 isolates, which are lightweight and have extremely fast startup times. This eliminates cold starts and ensures quick response times for your applications.

Benchmarking Helicone’s Proxy Service

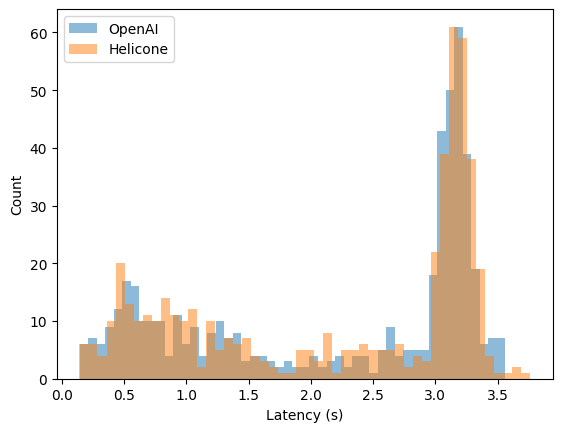

To demonstrate the negligible latency introduced by Helicone’s proxy, we conducted the following experiment:

- We interleaved 500 requests with unique prompts to both OpenAI and Helicone.
- Both received the same requests within the same 1-second window, varying which endpoint was called first for each request.
- We maximized the prompt context window to make these requests as large as possible.
- We used the `text-ada-001` model.
- We logged the roundtrip latency for both sets of requests.
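The procedure above can be sketched in Python as follows. This is a minimal illustration under stated assumptions, not the script used for the benchmark: `send_direct` and `send_proxied` are hypothetical callables that you would write to send one prompt to OpenAI and to Helicone respectively, and the two-thread pool is an assumption made so that both calls for a prompt are in flight within the same short window.

```python
import time
from concurrent.futures import ThreadPoolExecutor
from typing import Callable, Dict, List, Sequence

# Hypothetical sender type: takes a prompt, performs one request, returns anything.
Sender = Callable[[str], object]


def timed_call(send: Sender, prompt: str) -> float:
    """Return the wall-clock roundtrip time, in seconds, of one send(prompt) call."""
    start = time.perf_counter()
    send(prompt)
    return time.perf_counter() - start


def interleaved_benchmark(
    prompts: Sequence[str],
    send_direct: Sender,
    send_proxied: Sender,
) -> Dict[str, List[float]]:
    """Send each prompt to both endpoints at once, alternating which is submitted first."""
    latencies: Dict[str, List[float]] = {"direct": [], "proxied": []}
    with ThreadPoolExecutor(max_workers=2) as pool:
        for i, prompt in enumerate(prompts):
            order = [("direct", send_direct), ("proxied", send_proxied)]
            if i % 2 == 1:
                order.reverse()  # odd-indexed prompts are submitted to the proxy first
            # Submit both calls before waiting, so they run concurrently.
            futures = [(name, pool.submit(timed_call, send, prompt)) for name, send in order]
            for name, future in futures:
                latencies[name].append(future.result())
    return latencies
```

Calling `interleaved_benchmark(prompts, send_direct, send_proxied)` returns two lists of latencies in prompt order, one per endpoint, which can then be compared pairwise or summarized.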

Results

| Statistic | OpenAI (s) | Helicone (s) |
|---|---|---|
| Mean | 2.21 | 2.21 |
| Median | 2.87 | 2.90 |
| Standard Deviation | 1.12 | 1.12 |
| Min | 0.14 | 0.14 |
| Max | 3.56 | 3.76 |
| p10 | 0.52 | 0.52 |
| p90 | 3.27 | 3.29 |
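For readers who want to produce the same kind of summary from their own measurements, the sketch below computes each statistic in the table using Python's standard `statistics` module. The function name `summarize` is illustrative, and two choices here are assumptions, since the source does not state how its figures were derived: the standard deviation is the sample standard deviation, and the percentiles use the `inclusive` interpolation method.

```python
import statistics
from typing import Dict, Sequence


def summarize(latencies: Sequence[float]) -> Dict[str, float]:
    """Summarize roundtrip latencies (in seconds) with the statistics shown in the table."""
    # quantiles(n=10) returns the 9 cut points between deciles: p10, p20, ..., p90.
    deciles = statistics.quantiles(latencies, n=10, method="inclusive")
    return {
        "mean": statistics.mean(latencies),
        "median": statistics.median(latencies),
        "stdev": statistics.stdev(latencies),  # sample standard deviation (assumption)
        "min": min(latencies),
        "max": max(latencies),
        "p10": deciles[0],
        "p90": deciles[-1],
    }
```

Running `summarize` once on the direct latencies and once on the proxied latencies gives two columns that can be compared row by row, as in the table above.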

FAQ

Need more help?


Additional questions or feedback? Reach out to help@helicone.ai or schedule a call with us.